Building our own Duplex Phone Screen in JavaScript

Robocalls and telemarketers have been working hard to make our phones useless, calling us multiple times a day and stealing our precious attention. The sad truth is that many people can't just stop answering calls: amid all that marketing noise, some calls are too important to ignore, like a new client reaching out or your doctor calling with urgent news.

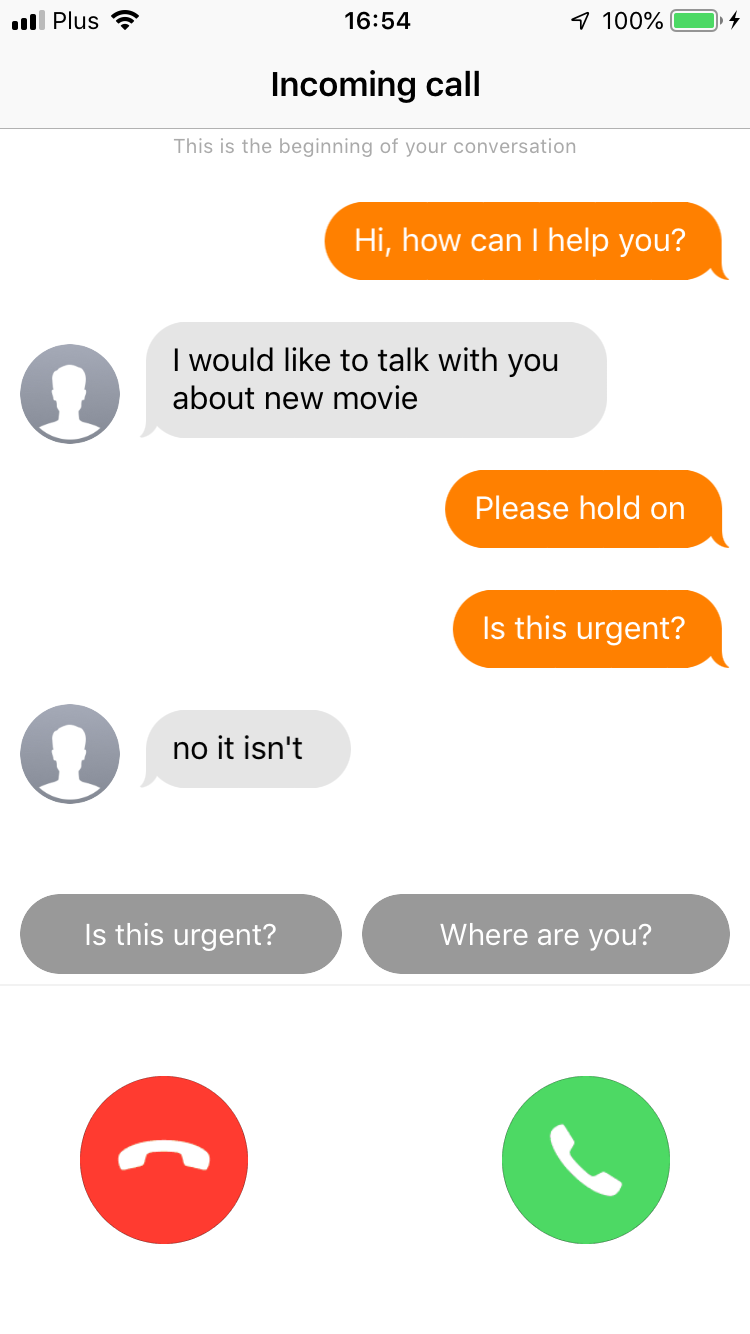

Last fall, Google came to our aid and announced a really interesting feature called Duplex Phone Screen. In short, it lets you push calls from unknown numbers through a screening done by a voice bot. You don't have to pick up the phone to talk to the caller - you can simply read the live transcript and decide whether the call is worth your time.

The disappointing part? It's only available on the latest Pixel phones with US SIM cards, so there's not much luck if you're an iOS user. The feature is also fairly limited: many users complain that the bot's introduction is too long and can scare away people you'd actually want to talk to.

Luckily for us, we know how to code. Bear with me and let's build one ourselves.

The technology is here

Recent advances in technology have given us a few interesting building blocks:

- Cloud-based call center platforms - for handling calls programmatically

- ASR (automatic speech recognition) - for getting accurate transcripts in real time

- NLP - for extracting valuable metadata from the transcript. For example, we could automatically detect the caller's name and company based on how they introduce themselves

- Speech synthesis - for responding to the caller, for example when there are follow-up questions

What will we be building?

Let's start with a simple proof of concept that interviews callers and pushes live transcripts to your phone. Check out the working demo in the video below.

In later parts, we'll also add entity extraction to highlight the most important information.

How does it work?

Due to iOS API limitations, we cannot intercept incoming phone calls. To work around that, we'll use a phone gateway service that gives us a new phone number. This is the number you can hand out freely: every call made to it goes through voice bot screening first.

Every time someone calls this number, we'll receive a notification on our phone. Tapping the notification opens the app with a real-time transcript. From there, we can also send follow-up questions and decide whether to accept or decline the call.

Let's get to work!

The Plan

- Create a phone gateway

- Find a service that supports text-to-speech (TTS) and speech-to-text (STT)

- Connect the phone number to the service

- Build a simple mobile app

- Send transcribed messages back and forth and allow for call transfer

Architecture

To achieve our goal we will need:

API

The API will hold the whole state of the call and forward messages and decisions to the mobile app. We'll cover the implementation details in later posts, but it will be fairly simple:

- GET /transcript -> {"transcripts": [{"text": "Hi!", "sender": "me", "id": "61840"}]}

- POST /transcript - merge new transcript messages into the stored transcript, matching them by id (the scenario sends a body like {"transcript": [...]})

- GET /call_status -> {"status": "accepted"}

- POST /call_status - set the status to either "accepted" or "declined"

- DELETE /clear - restore the default state
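To give a rough idea of what the API has to do, here is a minimal in-memory sketch of its state handling. It assumes a single call at a time and leaves out the HTTP layer entirely; the names createCallStore, postTranscript and so on, and the default status "pending", are hypothetical. Only the endpoint shapes come from the list above.

```javascript
// Hypothetical in-memory state behind the API endpoints listed above.
// Assumes one call at a time; an HTTP framework would call these methods.
function createCallStore() {
  // "pending" is an assumed default; the post only names the final states
  const initialState = () => ({ transcripts: [], status: "pending" })
  let state = initialState()

  return {
    // GET /transcript
    getTranscript() {
      return { transcripts: state.transcripts.slice() }
    },

    // POST /transcript with a body like {"transcript": [...]}:
    // append only messages whose id isn't stored yet
    postTranscript(body) {
      const knownIds = new Set(state.transcripts.map(m => m.id))
      for (const message of body.transcript || []) {
        if (!knownIds.has(message.id)) {
          state.transcripts.push(message)
          knownIds.add(message.id)
        }
      }
    },

    // GET /call_status
    getStatus() {
      return { status: state.status }
    },

    // POST /call_status with a body like {"status": "accepted"}
    setStatus(body) {
      if (body.status !== "accepted" && body.status !== "declined") {
        throw new Error("status must be 'accepted' or 'declined'")
      }
      state.status = body.status
    },

    // DELETE /clear
    clear() {
      state = initialState()
    },
  }
}

// The scenario re-sends the whole transcript, so repeated ids are ignored
const store = createCallStore()
store.postTranscript({ transcript: [{ text: "Hi!", sender: "me", id: "61840" }] })
store.postTranscript({
  transcript: [
    { text: "Hi!", sender: "me", id: "61840" },
    { text: "Hi, it's about my order", sender: "caller", id: "61841" },
  ],
})
// store.getTranscript().transcripts now holds 2 messages
```

Merging by id matters because the scenario posts the entire transcript every time the caller finishes a sentence, so the same message reaches the API many times.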

Phone Gateway service

We're going to use VoxImplant to handle inbound calls. Beyond that, it also provides ASR and TTS services that will be useful for interacting with callers.

Setup

- Create an account

- Create a new application and open it

- Buy a new number and attach it to the app (Numbers tab in the left menu)

- Create a new Scenario (Scenarios tab)

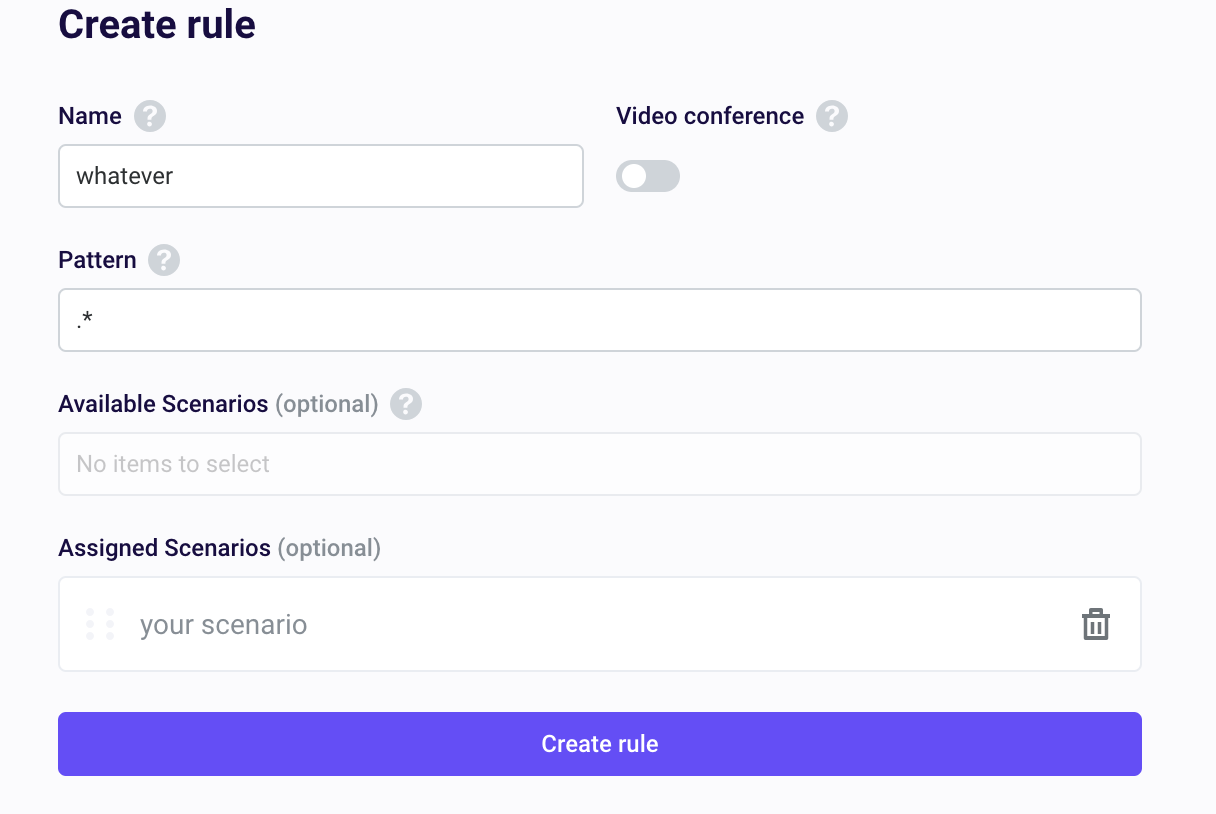

- Create a new routing rule (Routing tab)

Scenario

With the setup done, it's time to write the scenario code that runs for each incoming call.

1. Add this code to answer the call, then test it by calling your number:

let call = null

VoxEngine.addEventListener(AppEvents.CallAlerting, (e) => {
  call = e.call
  call.addEventListener(CallEvents.Connected, onCallConnected)
  call.addEventListener(CallEvents.Disconnected, VoxEngine.terminate)
  call.answer()
})

function onCallConnected(e) {
  call.say("Hello!")
}

2. Use the ASR module to transcribe speech to text. At this point, the app should repeat your words back to you.

SPEECH_STOP_TIME = 1000 means the caller has to stay silent for one second (1000 ms) before we treat the last sentence as finished.

require(Modules.ASR)

const SPEECH_STOP_TIME = 1000

let transcriptText = ""
let ts = null

const asr = VoxEngine.createASR(ASRLanguage.ENGLISH_US)

// Append each recognized phrase and restart the "end of sentence" timer
asr.addEventListener(ASREvents.Result, e => {
  transcriptText += (e.text + " ")
  clearTimeout(ts)
  ts = setTimeout(onSpeakingStopped, SPEECH_STOP_TIME)
})

asr.addEventListener(ASREvents.SpeechCaptured, () => { })

// The caller started speaking again, so the sentence isn't finished yet
asr.addEventListener(ASREvents.CaptureStarted, () => { clearTimeout(ts) })

// Replaces onCallConnected from step 1: start listening after the greeting
function onCallConnected(e) {
  call.say("Hello!")
  call.addEventListener(CallEvents.PlaybackFinished, () => {
    call.sendMediaTo(asr)
  })
}

// Called after SPEECH_STOP_TIME of silence: echo the sentence back
function onSpeakingStopped() {
  call.say(transcriptText)
  transcriptText = ""
}
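To see what SPEECH_STOP_TIME does without placing a call, the sketch below replays a list of timestamped ASR results offline and starts a new sentence whenever the gap between two results reaches the stop time. It's only an approximation: the scenario measures silence from a Result event to the next CaptureStarted event, and both the { at, text } input format and the groupUtterances name are hypothetical.

```javascript
// Offline illustration of SPEECH_STOP_TIME: split timestamped ASR results
// into sentences whenever the caller was silent for at least stopTimeMs.
// The { at, text } input format is hypothetical (at = milliseconds).
function groupUtterances(results, stopTimeMs) {
  const sentences = []
  let current = []
  let lastAt = null

  for (const { at, text } of results) {
    // A long enough pause closes the current sentence
    if (lastAt !== null && at - lastAt >= stopTimeMs) {
      sentences.push(current.join(" "))
      current = []
    }
    current.push(text)
    lastAt = at
  }
  // Flush whatever is left once the input ends
  if (current.length > 0) {
    sentences.push(current.join(" "))
  }
  return sentences
}

const SPEECH_STOP_TIME = 1000
const results = [
  { at: 0, text: "Hi, this is Anna" },
  { at: 600, text: "from Acme" },
  { at: 2500, text: "Is now a good time?" },
]

const utterances = groupUtterances(results, SPEECH_STOP_TIME)
// utterances: ["Hi, this is Anna from Acme", "Is now a good time?"]
```

A shorter stop time makes the bot react faster but risks cutting callers off mid-sentence; a longer one feels sluggish on the phone.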

3. Send the transcript to the API.

Remember to set webhookEndpoint to your API's address.

const webhookEndpoint = "https://yourapiaddress.com"
const webhookTranscriptEndpoint = webhookEndpoint + "/transcript"

// Local copy of the whole conversation
let transcript = []

// Replaces onSpeakingStopped from step 2
function onSpeakingStopped() {
  call.say(transcriptText)
  storeMessage(transcriptText, 'caller')
  transcriptText = ""
  sendTranscript()
}

// Each message gets a unique id so the API can merge without duplicates
function storeMessage(text, sender) {
  transcript.push({
    text: text,
    sender: sender,
    id: uuidgen()
  })
}

// POST the full transcript; the API merges it by message id
function sendTranscript() {
  const data = {
    transcript: transcript
  }
  const postData = JSON.stringify(data)

  Net.httpRequest(webhookTranscriptEndpoint, () => { }, {
    method: "POST",
    postData: postData,
    headers: ["Content-Type: application/json;charset=utf-8"]
  })
}

4. Clear the data on each new call and fetch incoming messages.

- answerPoolingInterval sets how often (in milliseconds) we poll the API for new data, since we can't receive webhooks here

- getNewTranscript fetches the transcript from the API and merges in only the messages we haven't seen yet

- readMessages uses TTS to read the new messages aloud, one by one
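The merge-and-read rule can be tried on its own, outside VoxEngine. This sketch assumes every message carries a unique id (as storeMessage guarantees for the caller's messages); mergeNewMessages is a hypothetical helper that uses a Set for the id lookup instead of filtering the whole transcript for each message, which gives the same result.

```javascript
// Hypothetical helper mirroring getNewTranscript/messageIsNew: keep only
// messages whose id isn't in the local transcript yet
function mergeNewMessages(localTranscript, remoteTranscripts) {
  const knownIds = new Set(localTranscript.map(m => m.id))
  const newMessages = remoteTranscripts.filter(m => !knownIds.has(m.id))
  return {
    transcript: localTranscript.concat(newMessages),
    newMessages: newMessages,
  }
}

// The caller's own message is already stored locally, so only the two
// questions typed in the app count as new
const local = [{ text: "Hi, this is Anna", sender: "caller", id: "1" }]
const fromApi = [
  { text: "Hi, this is Anna", sender: "caller", id: "1" },
  { text: "What is this about?", sender: "me", id: "2" },
  { text: "Can you call back later?", sender: "me", id: "3" },
]

const { transcript, newMessages } = mergeNewMessages(local, fromApi)

// Reading with shift() keeps the order the questions were typed in;
// pop() would read the newest one first
const queue = newMessages.slice()
while (queue.length > 0) {
  console.log("say: " + queue.shift().text)
}
```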

const webhookClearStatusEndpoint = webhookEndpoint + "/clear"
// How often (in ms) to poll the API
const answerPoolingInterval = 2000

// Messages from the app that still have to be read to the caller
let messagesToRead = []

// Replaces onCallConnected from step 2: reset the API state first
function onCallConnected(e) {
  clearState()
  call.say("Hello!")
  call.addEventListener(CallEvents.PlaybackFinished, () => {
    call.sendMediaTo(asr)
  })
}

// Reset the API, then start polling for messages and reading them out
function clearState() {
  Net.httpRequest(
    webhookClearStatusEndpoint,
    () => {
      setTimeout(getNewTranscript, answerPoolingInterval)
      readMessages()
    },
    { method: "DELETE" }
  )
}

function getNewTranscript() {
  Net.httpRequest(webhookTranscriptEndpoint, (response) => {
    const result = JSON.parse(response.text)
    // Keep only messages we don't have locally yet (those sent from the app)
    const newMessages = result
      .transcripts
      .filter(message => messageIsNew(message))
    transcript = transcript.concat(newMessages)
    messagesToRead = messagesToRead.concat(newMessages)
  })
  setTimeout(getNewTranscript, answerPoolingInterval)
}

function messageIsNew(newMessage) {
  const isNew = transcript.filter(
    message => message.id == newMessage.id
  ).length == 0
  return isNew
}

// Read queued messages one at a time, oldest first
function readMessages() {
  if (messagesToRead.length > 0) {
    const message = messagesToRead.shift()
    // One-off listener, so handlers don't pile up with every message
    const onFinished = () => {
      call.removeEventListener(CallEvents.PlaybackFinished, onFinished)
      readMessages()
    }
    call.addEventListener(CallEvents.PlaybackFinished, onFinished)
    call.say(message.text)
  } else {
    setTimeout(readMessages, 300)
  }
}

5. The only thing left is to transfer the call once it's accepted.

Set callerId to a number verified in VoxImplant (Settings > Caller IDs).

Set transferTo to the number you'd like the call transferred to.
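The decision logic boils down to mapping the polled status to one of three actions. Here it is isolated from VoxEngine so it can be exercised locally; handleDecision and the actions object are hypothetical stand-ins for transferCall, terminateCall and the setTimeout re-poll, and "pending" is just an assumed example of a non-final status.

```javascript
// Hypothetical dispatcher mirroring getDecision(): map the status returned
// by GET /call_status to an action and report which one was taken
function handleDecision(status, actions) {
  if (status === "accepted") {
    actions.transfer()
    return "transfer"
  }
  if (status === "declined") {
    actions.terminate()
    return "terminate"
  }
  // Anything else (e.g. an assumed "pending" default): ask again later
  actions.pollAgain()
  return "poll"
}

// Example stand-ins that only record what would happen
const log = []
const actions = {
  transfer: () => log.push("transfer"),
  terminate: () => log.push("terminate"),
  pollAgain: () => log.push("poll"),
}

handleDecision("pending", actions)
handleDecision("accepted", actions)
// log is now ["poll", "transfer"]
```

Treating every unknown status as "keep polling" means the scenario never acts on a typo or an empty response; it simply waits for a clear decision.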

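const callerId = "verified number"
const transferTo = "number to call"
const webhookDecisionEndpoint = webhookEndpoint + "/call_status"

// Replaces clearState from step 4: also start polling for the decision
function clearState() {
  Net.httpRequest(
    webhookClearStatusEndpoint,
    () => {
      setTimeout(getDecision, answerPoolingInterval) // new in this step
      setTimeout(getNewTranscript, answerPoolingInterval)
      readMessages()
    },
    { method: "DELETE" }
  )
}

// Poll /call_status until the call is accepted or declined
function getDecision() {
  Net.httpRequest(webhookDecisionEndpoint, (response) => {
    Logger.write(response.text)
    const result = JSON.parse(response.text)
    if (result.status === "accepted") {
      transferCall()
      asr.stop()
    } else if (result.status === "declined") {
      terminateCall()
      asr.stop()
    } else {
      setTimeout(getDecision, answerPoolingInterval)
    }
  })
}

// Dial the target number and bridge both calls after a short announcement.
// The announcement (example wording) makes sure PlaybackFinished fires
// even when nothing else is being read at that moment.
function transferCall() {
  const outgoingCall = VoxEngine.callPSTN(transferTo, callerId)
  call.addEventListener(CallEvents.PlaybackFinished, () => {
    VoxEngine.easyProcess(call, outgoingCall)
  })
  call.say("Please hold, I'm connecting you.")
}

// Hang up after a short goodbye (example wording)
function terminateCall() {
  call.addEventListener(CallEvents.PlaybackFinished, () => {
    VoxEngine.terminate()
  })
  call.say("Sorry, this call can't be taken right now. Goodbye!")
}

Summary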

Here it is! We have the core of our "Phone Screen" app in place.

What's left?

- Building a simple backend for managing the phone call state

- Building an iOS app to control the phone screening process

- Enhancing the experience with NLP

Stay tuned for the next posts.

What we've built is only an introduction to more practical use cases, such as filtering call center phone calls.

For years, companies have used IVRs for that, but they provide a poor experience for the customer. By incorporating ASR and NLP technologies, we can deliver a more natural experience. Given that, on average, 80% of the questions addressed to call center agents are simple and repetitive, we can also automate a large part of this process and reduce the need for a human on the other end of the line.

It's easy to imagine interesting ideas that could be built on top of our app: recording and replaying answers to recurring questions, automatic AI-powered suggestions on the backend, or a gentle do-not-disturb mode that politely asks callers about their intentions and notifies you only if something important comes up. But that's the future...
