Lessons From Designing Mixed Reality Experiences for AR Glasses

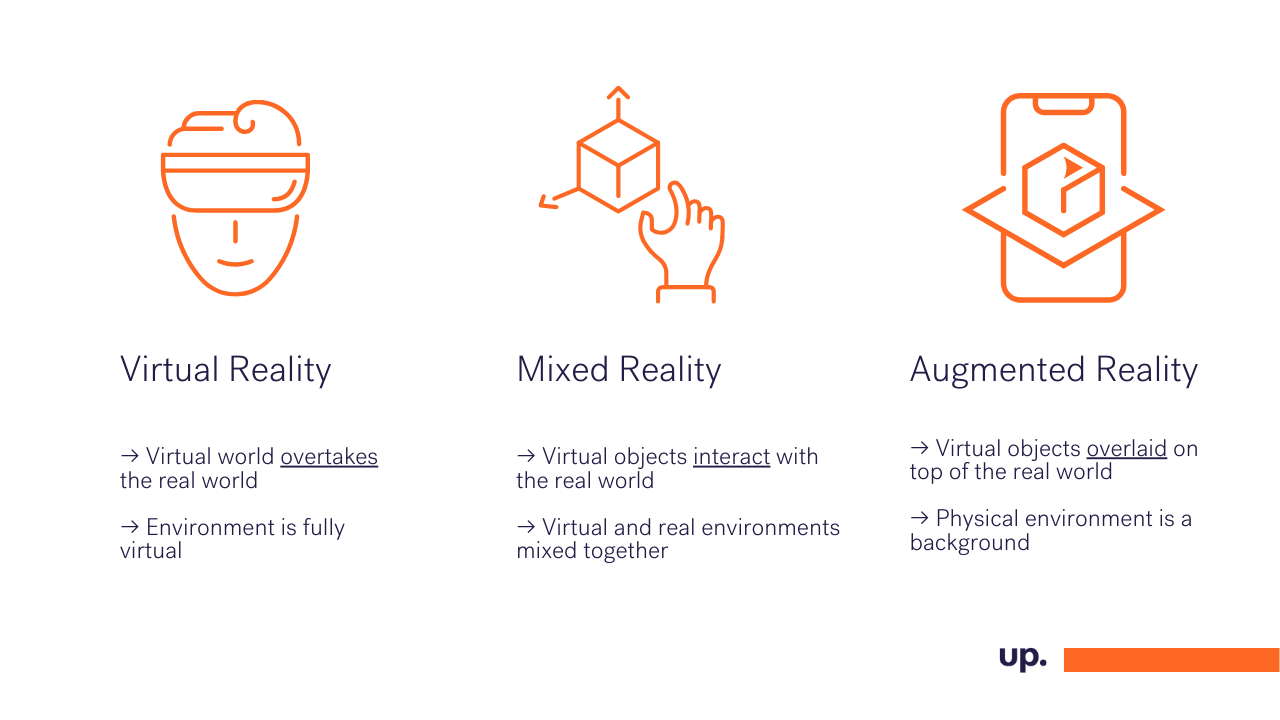

Attempts to blend the physical world with digital experiences have never been more advanced than they are now. With broader access to devices such as AR glasses and growing technologies like 5G and edge computing, digital experiences are here to stay.

In its Tech Trends report, published at the beginning of 2020, Deloitte names digital experiences (including Augmented Reality, Virtual Reality, Mixed Reality, and immersive technologies) as one of the major disruptors of the 2020s, set to enter manufacturing, healthcare, and entertainment and to provide a whole new level of interaction with technology.

Let's take a closer look at what digital experiences really are.

What are the differences between VR, AR, and MR?

Extended Reality (XR) is the umbrella term that describes the range of digital experiences based on the degree of virtuality presented to the user and the level of its interaction with the physical world.

Virtual Reality

The essence of VR is full immersion in a virtual world. Whenever a user puts a VR headset on, all they can see is a computer-generated ecosystem displayed on the screen inside. They can interact with the virtual environment using head movements and handheld controllers that detect motion and button clicks.

Importantly, VR is the most mature of the Extended Reality technologies and gives designers the most control over what the user sees and experiences, because the user is fully immersed in a digital environment. Because of that, it has become popular in employee training (especially for high-risk jobs) as a way to simulate conditions or unique training gear.

However, VR is most commonly used in gaming, where game designers are providing users with more and more interactive ecosystems. Notable titles include "Half-Life: Alyx", which allows players to have sophisticated interactions with digital objects, e.g. playing a virtual piano, writing on a board, or... juggling.

https://youtu.be/FRoJpmez1po

Augmented Reality

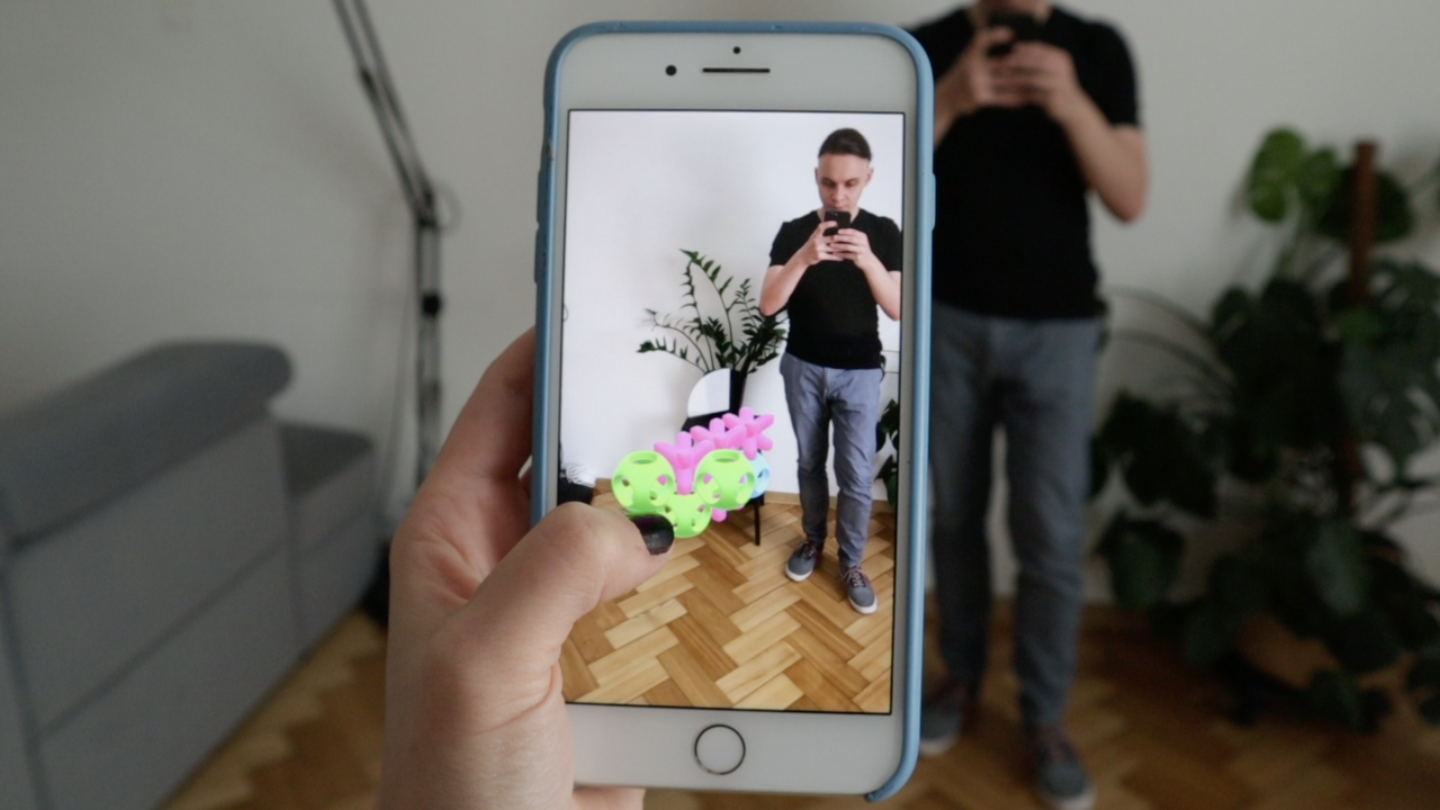

In contrast to Virtual Reality, where everything is computer-generated, Augmented Reality is all about overlaying virtual objects on top of the real world. AR became immensely popular in 2016 with the debut of Pokémon GO.

AR, like VR, is a mature technology used mostly in entertainment, especially gaming and filters on Instagram and Snapchat. It is also steadily gaining traction in e-commerce as a tool for previewing items before purchase and for virtual fitting rooms.

IKEA was among the early successes: two years ago it launched its AR app, IKEA Place, to help its customers measure spaces and virtually place pieces of furniture in them.

Interesting data on the usage of AR in e-commerce has been presented recently by Digi-Capital. Its long-term forecast predicts that the AR/VR market will reach around $65 billion in revenue by 2024, with the next two years affected by COVID-19 related factors such as physical lockdowns and bricks-and-mortar retail closures. AR might be the easiest and most feasible way to showcase products to customers in the coming years.

Mixed Reality

Mixed Reality is all about interweaving the physical and virtual worlds. You can think of it as AR on steroids. In MR, virtual objects remain anchored in the real environment, and users can interact with them as if they were real.

MR has the broadest range of powerful use cases: imagine a firefighter equipped with Iron Man's technology. They could see a detailed map of the building that's on fire, with heatmaps and other information that would allow them to act faster and make better-informed decisions. Or, as Microsoft has demonstrated, imagine being able to design and interact with a digital exhibition.

It is, however, the newest and least mature of the three Extended Reality technologies mentioned here. Current Mixed Reality solutions are mostly experimental and very expensive, which makes them difficult to adopt widely. The most popular production-ready MR devices include Microsoft HoloLens and the glasses by Nreal. However, judging by the number of information leaks, we are entering an era of less complex and more affordable MR glasses, which will make MR commonplace.

When creating Mixed Reality solutions, the role of experience designers is essential. User experience consists of many interactions, namely between users and the environment, between users and virtual objects, and between virtual objects and the environment. Getting all of these right makes it possible to create immersive experiences that keep users engaged.

Redefining Mixed Reality Gaming

Staying at the edge of new technologies, we started to experiment with Mixed Reality early on. When our technology team was presented with the challenge of creating an MR gaming experience, the idea of an interactive, multiplayer, real-time game was born. With the decision to redefine the classic concept of Tic-Tac-Toe, XO came to life.

XO is a game that brings Tic-Tac-Toe from its 2D form on paper to digital space in 3D. It follows a few simple rules:

→ It's played in 3D Extended Reality on an infinite board.

→ There are no turns, and you can place an emblem (pink X or green O) every 2 seconds.

→ You can place emblems only on the sides (that includes the top and bottom) of existing emblems.

→ The game starts with a neutral blue emblem in the middle.

→ You have to have five emblems in a row to win (including diagonals).

Even though the rules are simple, the game gets very fast-paced and competitive once both players get the hang of it.
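To make the rules concrete, here is a minimal Python sketch of them. It is not the code running in XO. The names (`new_board`, `can_place`, `wins`), the integer grid coordinates, and the choices that "diagonals" covers both planar and space diagonals and that the neutral emblem does not count toward a row are all assumptions made for illustration.

```python
from itertools import product

COOLDOWN_SECONDS = 2.0  # from the rules: one emblem every 2 seconds
WIN_LENGTH = 5          # from the rules: five emblems in a row
NEUTRAL = "neutral"     # the blue starting emblem

# The six faces of a cube-shaped emblem (four sides plus top and bottom).
FACE_OFFSETS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]

# One representative per line direction through a cell: 3 axes,
# 6 planar diagonals and 4 space diagonals (assumed to all count).
LINE_DIRECTIONS = [d for d in product((-1, 0, 1), repeat=3) if d > (0, 0, 0)]


def new_board():
    """An 'infinite' board is just a sparse mapping from cell to owner."""
    return {(0, 0, 0): NEUTRAL}


def can_place(board, cell, last_placed_at, now):
    """A move is legal if the cooldown has passed, the cell is free,
    and the cell touches a face of an existing emblem."""
    if now - last_placed_at < COOLDOWN_SECONDS:
        return False
    if cell in board:
        return False
    x, y, z = cell
    return any((x + dx, y + dy, z + dz) in board for dx, dy, dz in FACE_OFFSETS)


def wins(board, cell, player):
    """Check for WIN_LENGTH in a row through `cell`, which `player` just took."""
    for dx, dy, dz in LINE_DIRECTIONS:
        run = 1  # the freshly placed emblem itself
        for sign in (1, -1):  # walk both ways along the line
            x, y, z = cell
            while True:
                x, y, z = x + sign * dx, y + sign * dy, z + sign * dz
                if board.get((x, y, z)) != player:
                    break
                run += 1
        if run >= WIN_LENGTH:
            return True
    return False
```

Because there are no turns, a server would run `can_place` for each incoming request with that player's own `last_placed_at` timestamp (use `float("-inf")` before their first move) and only then check `wins` for the cell that was just filled.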

The mobile version of XO uses Augmented Reality technology both on Android (ARCore) and iOS (ARKit) and utilizes Edge Computing for a snappy multiplayer experience.

From Mobile Experience to Mixed Reality Glasses

The mobile version of XO gathered a lot of positive feedback at international conferences such as Bright Day in the Netherlands, where it was showcased together with T-Mobile, MobiledgeX, and OPPO. So when interest in porting the game to extended reality glasses appeared, we couldn't refuse! Our team was lucky enough to work with one of the early versions of the ThinkReality MR glasses by Lenovo.

Let's stop here to take a closer look at the glasses themselves. MR glasses usually consist of three major parts:

→ Transparent glasses that collect data from multiple sensors (camera, accelerometer, gyroscope) and show virtual overlays to the user. The overlays are displayed directly on the glass surface of the glasses.

→ Computing unit that analyzes collected data, communicates with external services, and prepares what to show to the user.

→ Controller that gathers button actions from the user and can be used to provide haptic feedback.

When we started development, we were most concerned about the technical difficulties of using experimental APIs and unfamiliar hardware. To our astonishment, the hardware was great to work with. The toughest part turned out to be ensuring that players have a great experience.

The Great Design Challenges

I've already mentioned that, but let me repeat: When creating Mixed Reality solutions, the role of experience designers is essential. User experience consists of three types of interactions:

→ between users and the environment

→ between users and virtual objects

→ between virtual objects and the environment.

Getting all of these right makes it possible to create immersive experiences that keep users engaged.

How To Create A Good Pointer?

It might seem like a pointer is something that would require zero effort to design. We have to keep in mind, however, that everything we show to a user in MR has to be physically placed in 3D space. It turned out not to be as easy as using a mouse pointer or a finger in touch-based interfaces.

First, let's recall what user input we have available. In the case of the glasses we were designing for, we only know about the position and rotation of the user's head (glasses), a live video of what they see, and one action button.

Secondly, let's discuss what we want from our pointer. Its main goal is to show the user where they can place a new emblem. It has to be very precise because the result of the game depends on it. We also want the pointer to convey other information, like where the game is happening when it's not visible (e.g. the user is facing the other way) or that the user has recently placed an emblem and has to wait for the cooldown to finish.

Let's start with a simple exercise:

Hold your hand out in front of you with your thumb pointing upwards. Imagine it is your pointer, and try looking at different things in your vicinity while keeping the thumb in the middle of your vision. You will notice a few phenomena, like the thumb doubling and becoming blurry when you look at something at a different distance. This is a good simulation of what would happen if we placed a dot pointer in front of the user's face. We can try tweaking parameters like the dot size and its distance from the user, but that doesn't solve the fundamental problem: the dot feels out of place.

The solution to the problem was fairly simple: instead of showing a simple dot in front of the user, we visualize the action they can take while looking a certain way. When they look at a place where they could place an emblem, we show them a preview of that emblem (slightly transparent and in a different color). When they face away from the game, we show them an arrow pointing to the center of the game. We fall back to the dot only when they are looking at nothing interesting, to gently signal that everything is working as intended. When designing for more experienced users, this wouldn't be necessary.
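The decision logic behind such a pointer can be sketched in a few lines of Python. This is an illustration under assumptions, not our production code: the names `PointerMode`, `is_in_view`, and `choose_pointer` are hypothetical, the 20-degree half field of view is a made-up value, and the dedicated `COOLDOWN` state is only one possible way to show the waiting period.

```python
import math
from enum import Enum


class PointerMode(Enum):
    PREVIEW = "preview"    # semi-transparent emblem where one can be placed
    ARROW = "arrow"        # arrow pointing back to the center of the game
    COOLDOWN = "cooldown"  # assumed: a "please wait" variant of the pointer
    DOT = "dot"            # fallback when nothing interesting is targeted


def is_in_view(head_pos, head_forward, target, half_fov_deg=20.0):
    """True if `target` lies within a cone around the head's forward vector."""
    to_target = [t - h for t, h in zip(target, head_pos)]
    dist = math.sqrt(sum(c * c for c in to_target))
    fwd_len = math.sqrt(sum(c * c for c in head_forward))
    if dist == 0.0 or fwd_len == 0.0:
        return True  # degenerate input: treat the target as visible
    cos_angle = sum(a * b for a, b in zip(to_target, head_forward)) / (dist * fwd_len)
    return cos_angle >= math.cos(math.radians(half_fov_deg))


def choose_pointer(game_in_view, target_is_placeable, cooldown_remaining):
    """Pick what to render based on what the user could do right now."""
    if not game_in_view:
        return PointerMode.ARROW
    if cooldown_remaining > 0:
        return PointerMode.COOLDOWN
    if target_is_placeable:
        return PointerMode.PREVIEW
    return PointerMode.DOT
```

The only inputs are the head pose and the game state, which matches what the glasses give us: there is no hand or eye tracking to rely on.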

One thing we noticed when prototyping the pointer is that improperly positioned MR elements (like a pointer that is too close or too far away) can cause physical discomfort such as eye strain and headaches, so we have to be extra careful when designing MR experiences.

How To Create A Non-interfering 2D User Interface?

During the game, we sometimes have to give users additional pieces of information. For example, we want to tell the user when a game is starting or that they have won. Pop culture wants to convince us, through movies like Iron Man, that a head-up display (any transparent display that presents data without requiring users to look away from their usual viewpoint) is the way to go in Mixed Reality. Sadly, we have to remember that everything we show the users has to be located in 3D space, even if the object itself is flat, like text.

We, as creators of MR solutions, carry the burden of choosing how to display the text. There are two basic methods: anchoring the notice to the user's head or anchoring it to the environment. The former approach seems more appealing but looks weird in practice. Presenting text this way may be very uncomfortable for the user (if the font is too large or too small, or the text is too close to the user's eyes). Even if we adjust the settings properly (and the same settings can be perceived differently by different people), we still break the immersion because the text feels out of place.

So what to do, then? During one of the brainstorming sessions we got inspired by basketball games and how textual information is presented to the audience.

At any given moment, fans can look away from the game and focus on the information shown on the arena display.

We decided to replicate this mechanism: the game is the central part of the experience, and the 2D information is arranged in the form of an arena around the player. Here is how it looks in Unity:

Anchoring the notices around the game center makes them feel more like a part of the game. This approach is not perfect, because the notices could appear "behind" walls, but we knew our game would most likely be played in an open space where an overlap of the XO arena with real walls is unlikely.
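Placing notices in such an arena comes down to a bit of trigonometry. The Python sketch below assumes a Unity-like coordinate system (y points up, a yaw of 0 degrees faces +z) and evenly spaced panels that all face the game center. The function name `arena_panels` and its parameters are hypothetical and chosen for illustration only.

```python
import math


def arena_panels(center, radius, count, height=0.0):
    """Return (position, yaw_degrees) for `count` panels on a ring around
    `center`, each rotated about the vertical axis to face the center."""
    cx, cy, cz = center
    panels = []
    for i in range(count):
        angle = 2.0 * math.pi * i / count
        x = cx + radius * math.sin(angle)
        z = cz + radius * math.cos(angle)
        # Yaw of the direction from the panel back to the center.
        yaw = math.degrees(math.atan2(cx - x, cz - z))
        panels.append(((x, cy + height, z), yaw))
    return panels


# Example: four notices on a 3 m ring, 1.5 m above the game center.
for position, yaw in arena_panels((0.0, 0.0, 0.0), 3.0, 4, height=1.5):
    print(position, yaw)
```

Because the positions depend only on the game center, the panels stay fixed in the room while the player moves their head, which is exactly what makes them feel like part of the scene rather than something glued to the face.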

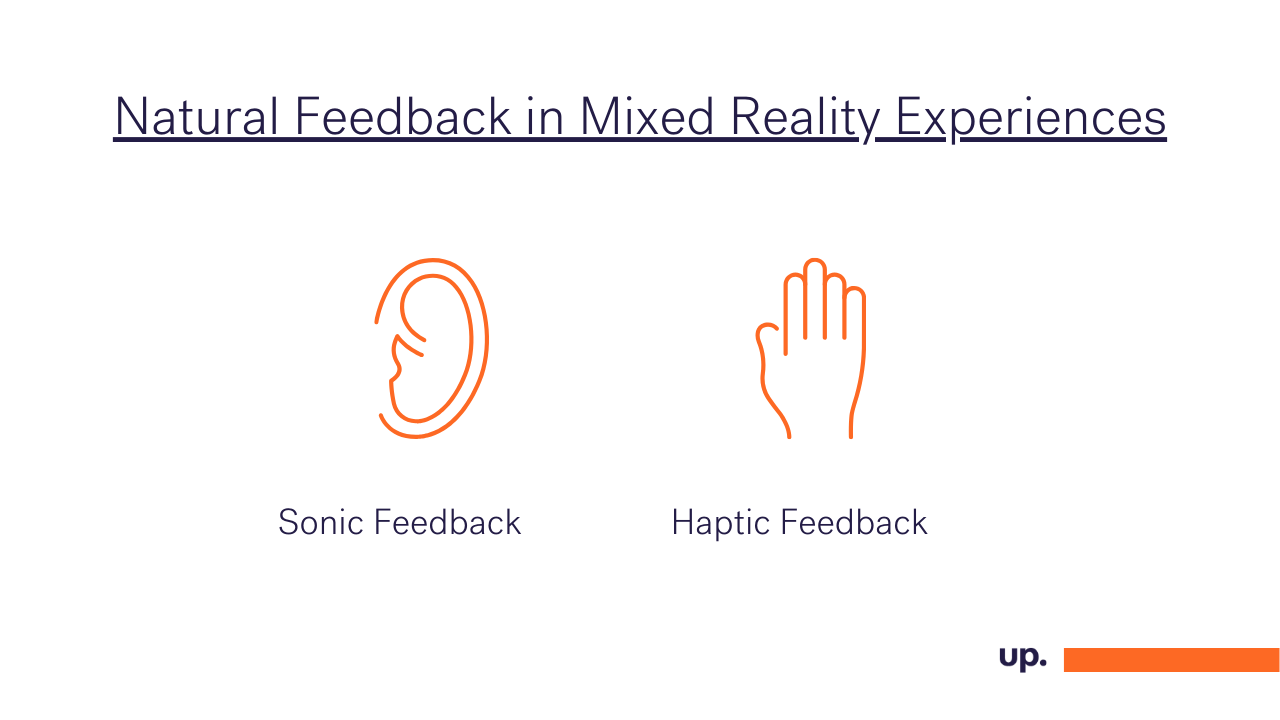

How To Provide Feedback To The Players?

When we interact with the real world, vision is not the only thing we use. We have a multitude of other senses which help us discover the environment around us. We can't fully take advantage of that when designing desktop or mobile apps, but MR glasses create a whole new realm of possibilities.

How can we let the player know their opponent has placed an emblem and tried to block them? We can show them the emblem, but we can also indicate that with a sound. When we hear a sound, we immediately know the direction from which it is coming. We can accomplish the same effect using MR glasses and their built-in headphones. Because we know the location of the player's head and the placed emblem, we can simulate the effect very accurately.
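As a rough illustration of the idea, the Python sketch below derives left and right channel volumes from the head pose and the emblem position. It is a deliberately simplified constant-power stereo pan, not the spatializer we shipped and not a full HRTF model. It assumes a Unity-like coordinate system (y up, yaw 0 faces +z, +x is to the right), and the function names are hypothetical.

```python
import math


def relative_azimuth(head_pos, head_yaw_deg, source_pos):
    """Horizontal angle of the source relative to where the head is facing,
    in degrees within [-180, 180); positive means to the listener's right."""
    dx = source_pos[0] - head_pos[0]
    dz = source_pos[2] - head_pos[2]
    world_angle = math.degrees(math.atan2(dx, dz))
    return (world_angle - head_yaw_deg + 180.0) % 360.0 - 180.0


def stereo_gains(azimuth_deg):
    """Constant-power pan: returns (left_gain, right_gain).
    Sounds directly ahead or directly behind end up centered."""
    pan = math.sin(math.radians(azimuth_deg))  # -1 = hard left, +1 = hard right
    theta = (pan + 1.0) * math.pi / 4.0        # map to 0 .. pi/2
    return math.cos(theta), math.sin(theta)
```

A real engine would also attenuate the sound with distance and filter it to tell front from back, but even this simple pan is enough to make the player turn their head the right way.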

Depending on the MR device, we could also use haptic feedback to simulate the feeling of touch. The main thing to remember is to know what is possible and to try to mimic what we know from our interactions with the real world.

Lessons From Designing Mixed Reality Solutions

Designing a Mixed Reality solution that works on both mobile devices and glasses taught us that problems already solved for 2D interfaces still have to be solved for 3D interfaces.

1. The key to creating good UX is keeping users immersed in Mixed Reality.

We should make sure that virtual objects that users see make sense. We don't want objects to seem out of place or physically impossible. A virtual shelf anchored to the wall is fine, but a virtual shelf that is visible through a wall immediately breaks the immersion.

2. The real environment is a part of user experience and has to be taken into account when designing.

The environment can impact a lot of things, from how we should anchor virtual objects to what colors we should use when displaying them. Because of how the overlay is rendered on the MR glasses (light projected on glass), we cannot create objects darker than what the user sees.
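A simplified way to reason about this is to treat the display as additive: the eye receives the real-world light plus whatever the glasses project. The Python sketch below models colors as linear RGB values between 0 and 1 and clamps the sum. The function names, the clamping, and the 0.1 visibility threshold are assumptions for illustration, not measurements from a real device.

```python
def perceived_color(background, overlay):
    """Additive model: projected light is added to the real-world light."""
    return tuple(min(1.0, b + o) for b, o in zip(background, overlay))


def luminance(rgb):
    """Relative luminance with the Rec. 709 weights (linear RGB assumed)."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def is_noticeable(background, overlay, min_delta=0.1):
    """Rough check whether the overlay brightens the scene enough to be seen."""
    seen = perceived_color(background, overlay)
    return luminance(seen) - luminance(background) >= min_delta


gray_wall = (0.5, 0.5, 0.5)
black_emblem = (0.0, 0.0, 0.0)
# A black overlay adds no light, so the wall looks unchanged.
assert perceived_color(gray_wall, black_emblem) == gray_wall
```

In this model a dark shadow or black outline simply disappears, and a bright emblem that stands out in a dim room can wash out against a sunlit wall, which is why colors have to be chosen with the play environment in mind.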

3. MR technology is still very experimental, and the same design can look and feel different on various devices.

Because the technology is still in the early stages of development, we can see small differences in what users perceive between devices, even of the same model. For example, we found that some objects that were perfectly visible on one pair of glasses were almost invisible on another. Because of that, it is important to test your products on many different devices.
